Why Transcripts Can’t Catch Scams (But AI Fraud Detection Can)

Key Takeaways:

- Fraud is increasingly voice-based, and transcripts can’t reliably identify the acoustic signals and behavioral patterns that can indicate fraud.

- By leveraging AI fraud detection, businesses can analyze audio in real time and catch fraud as it happens.

AI tools can do great things for businesses, but they have a dark side. Hackers, scammers, and fraudsters are now using free AI audio generators to launch more believable attacks at scale. With just a few clicks, they can sound like trusted customers or executives at your company.

Your employees may be more wary of phishing emails, but audio AI attacks are the next frontier for scammers. It’s getting progressively harder to spot these scams in real time, and traditional fraud-prevention systems can’t keep up.

The challenge is that audio fraud isn’t just about what’s being said. It’s about how it’s being said. To keep up, more organizations are investing in AI fraud detection. Learn why traditional safeguards aren’t enough, the cost of getting it wrong, and how AI fraud detection systems spot audio scams in real time.

In this guide:

- What is AI Fraud Detection?

- Traditional Fraud Prevention Doesn’t Cut It

- The Real Cost of Catching Fraud After the Fact

- AI Fraud Detection Catches Scams in Progress

- Fraud Is Evolving. Detection Has to Evolve Faster.

- Frequently Asked Questions

What is AI Fraud Detection?

AI fraud detection is the application of AI and machine learning to catch fraud as it unfolds. Rather than relying on fixed rules or human reviewers to detect suspicious behavior, AI fraud detection algorithms analyze large volumes of data, including transactional, behavioral, voice, and device data.

Machine learning techniques can then be leveraged to detect fraud faster than traditional methods, learn from new fraud patterns over time, and identify fraudulent activity that normally goes unnoticed.

With AI fraud detection, businesses can stop scams before they cause damage. In industries such as financial services, insurance, healthcare, ecommerce, telecom, and contact centers, AI can identify suspicious behavior or unusual voice and conversation patterns. Businesses can set up alerts or automated responses that trigger when abnormal behavior is detected, preventing financial loss and protecting customers and employees from sophisticated fraud.

Traditional Fraud Prevention Doesn’t Cut It

Traditional fraud prevention was designed for text-based attacks. These systems can still spot low-hanging fruit, relying on data from:

- Call metadata

- Post-call analysis

- Keyword detection

These tools aren’t useless, but they can’t do proper AI fraud detection. That’s because fraud isn’t primarily a text problem. It’s an audio problem.

Fortunately, deception leaves acoustic fingerprints like:

- Scripted responses that sound just slightly too structured

- Mismatched emotional tone and language

- Hesitations or unnatural pacing

- Elevated stress patterns

- Background noise that suggests coordination or coaching

These signals won’t appear in metadata or a transcription, and humans can’t reliably catch them. If your fraud detection system doesn’t account for these signals, you’re only listening to half the conversation.
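To make the gap concrete, here is a minimal sketch of a transcript-only keyword check. The phrase list, the `Call` fields, and the feature values are all hypothetical and chosen for illustration; they are not taken from any real product. The acoustic fields are assumed to come from some upstream audio model.

```python
from dataclasses import dataclass

# Hypothetical keyword list; real rule sets are larger and tuned over time.
SUSPICIOUS_PHRASES = ("wire transfer", "gift card", "reset my password")


@dataclass
class Call:
    transcript: str
    # Illustrative acoustic features, assumed to come from an audio model.
    stress_level: float           # 0.0 (calm) to 1.0 (highly stressed)
    synthetic_voice_score: float  # 0.0 (natural) to 1.0 (likely generated)


def keyword_flag(call: Call) -> bool:
    """Transcript-only check: sees the words, ignores how they were spoken."""
    text = call.transcript.lower()
    return any(phrase in text for phrase in SUSPICIOUS_PHRASES)


# Same words, very different audio.
genuine = Call("Hi, I need to update my mailing address.", 0.1, 0.05)
cloned = Call("Hi, I need to update my mailing address.", 0.8, 0.95)

print(keyword_flag(genuine), keyword_flag(cloned))
```

Both calls get the same verdict because the check never reads the acoustic fields. Two conversations with identical wording look identical to a transcript-based rule, no matter how different they sound.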

The Real Cost of Catching Fraud After the Fact

Anywhere customers verify identity over the phone, there’s an opportunity for voice-based social engineering or synthetic impersonation. Voice-cloned AI reps have tricked executives into urgent wire transfers. Contact center phone spoofers impersonate legitimate customers to change passwords and account information.

Banking, retail, and insurance are just some of the many industries hit hard by voice fraud. Post-call detection can help you reverse some losses, but it’s nothing more than damage control.

Even if you identify fraud after the call ends, you’ll still see problems like:

- Unauthorized or illegal funds transfers

- Changed account details

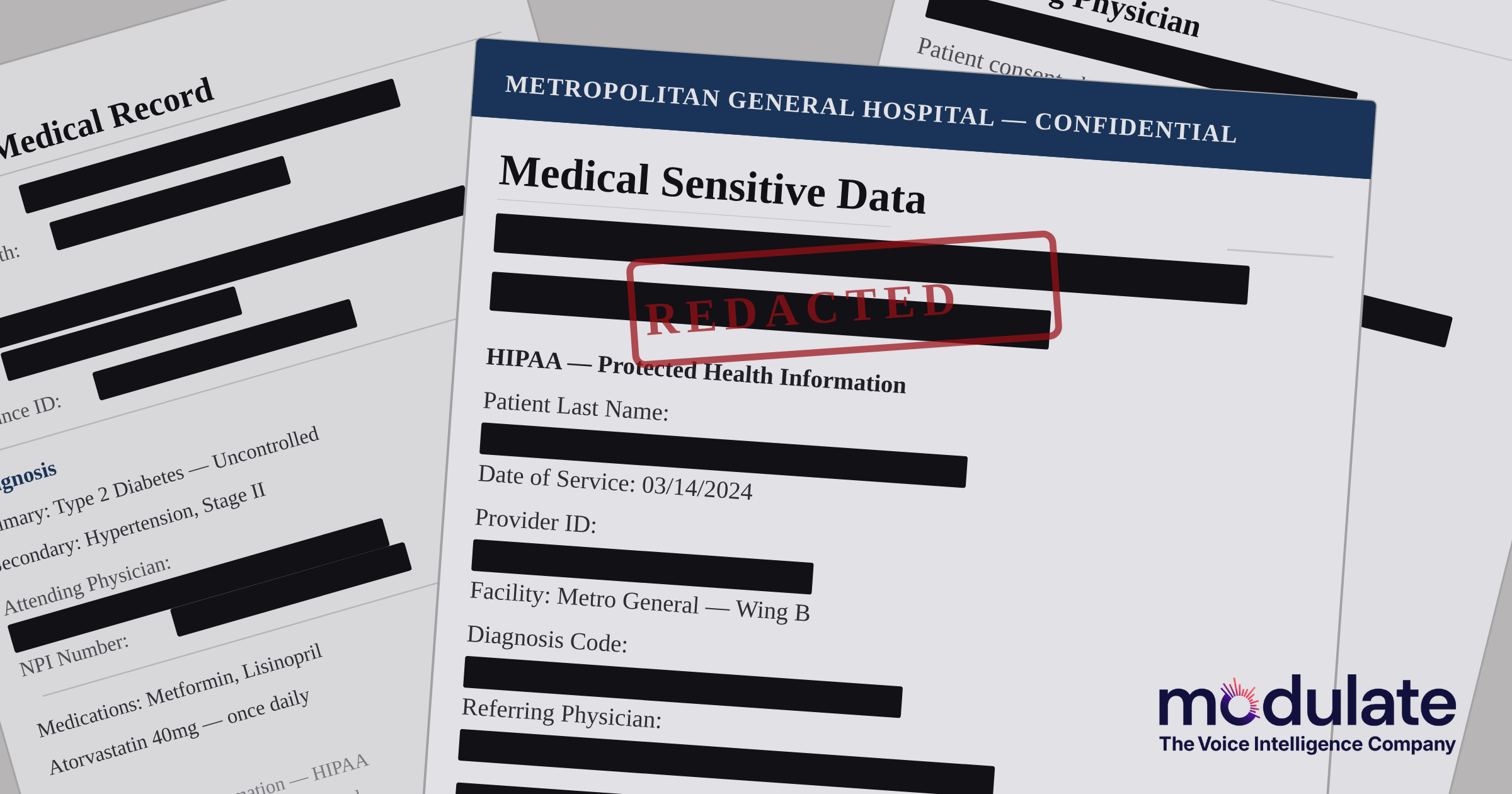

- Exposed sensitive data

- Internal and external investigations

- Regulatory audits or fines

- Reduced customer trust

Fraud is always a headache, and the damage compounds at scale. Failing to catch fraudsters in the act is costing you dearly, which is why businesses are embracing voice AI fraud detection to beat scammers at their own game.

AI Fraud Detection Catches Scams in Progress

If fraud is an audio problem, detection has to be audio-native. Traditional AI fraud systems analyze transcripts, but real-time voice fraud detection analyzes the voice itself as the conversation unfolds. This technology analyzes what’s said, as well as how it’s said.

Voice-native AI models, like Modulate’s Velma, treat speech as structured behavioral data. They can detect acoustic red flags in milliseconds, including stress markers and artifacts in AI-generated voices.
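As a rough illustration of what "as the conversation unfolds" can mean in practice, the sketch below raises an alert mid-call once the average risk over the last few audio chunks crosses a threshold. This is not Modulate’s API or method. The function name, the window size, the threshold, and the per-chunk scores are all assumptions, and the scores are presumed to come from some upstream audio model.

```python
from collections import deque
from typing import Iterable, Iterator


def rolling_alerts(
    chunk_scores: Iterable[float],
    window: int = 3,         # hypothetical: number of recent chunks to average
    threshold: float = 0.7,  # hypothetical: mean risk that triggers an alert
) -> Iterator[int]:
    """Yield the index of each chunk where the rolling mean risk meets the threshold."""
    recent: deque = deque(maxlen=window)
    for index, score in enumerate(chunk_scores):
        recent.append(score)
        # Only evaluate once a full window of chunks has been seen.
        if len(recent) == window and sum(recent) / window >= threshold:
            yield index


# Made-up per-chunk risk scores for a single call.
scores = [0.1, 0.2, 0.9, 0.8, 0.9, 0.3]
first_alert = next(rolling_alerts(scores), None)
print(first_alert)
```

Averaging over a short window means one noisy chunk does not trigger an alert on its own, while a sustained run of risky audio does, and it does so before the call ends.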

Of course, you don’t want to get it wrong and accidentally flag a legitimate conversation as a scam or fraud. Modulate has a fix for that, too.

Modulate’s voice intelligence models analyze emotional signals and conversational behavior to explain why they flagged a conversation as potentially fraudulent. That transparency allows agents and fraud teams to act confidently and not just react to a vague risk score.

Fraud Is Evolving. Detection Has to Evolve Faster.

AI fraud detection can’t replace human judgment. But if your business handles a high volume of phone calls or audio media, it’s a smart tool for detecting deception that would otherwise go unnoticed.

Since voice cloning tools and synthetic speech systems are so easy to access, you need a way to automatically spot deceptive intent beyond transcripts. Modulate’s real-time voice intelligence takes a voice-first approach to protecting both you and your customers. See how Modulate’s VoiceVault detects deception in real time and helps teams stop voice fraud before damage is done.

Frequently Asked Questions

Does real-time voice analysis raise privacy concerns?

Not necessarily. Any AI system that analyzes customer interactions must comply with strict data protection requirements. As long as you follow these requirements, real-time voice analysis shouldn’t pose additional issues.

Can voice AI adapt to different languages and accents?

Some models can. Look for advanced, voice-native models trained on large and diverse datasets. This increases the likelihood that your model can handle accents and diverse speech patterns.

How does voice fraud detection reduce false positives?

The rigid rules and keyword triggers in traditional fraud systems can generate a lot of false positives. Voice intelligence models reduce them by taking a more nuanced approach. Instead of relying on a single red flag, they look for multiple acoustic and emotional signals to calculate the conversation’s overall risk level.
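One simple way to picture this is a weighted score that only flags a call when several signals agree. The sketch below is an assumption-heavy illustration, not how any specific product works: the signal names, weights, and thresholds are hypothetical, and production systems typically learn these from data rather than hard-coding them.

```python
from typing import List, Mapping, Tuple

# Hypothetical signal weights; they sum to 1.0 so the score stays in [0, 1].
WEIGHTS = {
    "stress": 0.3,
    "synthetic_artifacts": 0.4,
    "tone_mismatch": 0.2,
    "unnatural_pacing": 0.1,
}


def assess(
    signals: Mapping[str, float],
    score_threshold: float = 0.5,  # hypothetical overall risk cutoff
    strong_signal: float = 0.6,    # hypothetical level at which a signal counts
    min_signals: int = 2,          # require agreement between signals
) -> Tuple[bool, float, List[str]]:
    """Return (flagged, overall score, names of the signals that contributed)."""
    score = sum(weight * signals.get(name, 0.0) for name, weight in WEIGHTS.items())
    reasons = [name for name in WEIGHTS if signals.get(name, 0.0) >= strong_signal]
    flagged = score >= score_threshold and len(reasons) >= min_signals
    return flagged, score, reasons


# A stressed but otherwise normal caller: one red flag, not flagged.
print(assess({"stress": 1.0}))

# Several signals pointing the same way: flagged, with reasons attached.
print(assess({"stress": 0.8, "synthetic_artifacts": 0.9, "tone_mismatch": 0.7}))
```

Requiring more than one strong signal keeps a single nervous caller from being treated as a fraudster, and returning the contributing signals gives a reviewer something more useful than a bare number.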

Can AI detect voice cloning scams?

Yes. More advanced voice fraud detection systems can identify acoustic artifacts, unnatural timing patterns, and emotional tells that many synthetic voices fail to reproduce. If a fraudster uses a cloned voice or AI-generated speech to try to trick a customer service agent, these models can flag it as potentially fraudulent even if the imitation sounds perfect to the human ear.